Artificial Intelligence (AI) chips have become foundational to computing, powering everything from large-scale language models to real-time edge processing. These purpose-built processors are reshaping data centers, consumer electronics, autonomous systems, and industrial automation. AI chips are central to competitive advantage in sectors like healthcare, automotive, and cloud services. This article provides a detailed statistical deep dive into the current state and future outlook of AI chips across technologies, markets, and applications. Let’s explore how the numbers define the future of intelligent silicon.

Editor’s Choice

- The global AI chip market is projected to reach $121.7 billion in 2026, growing at a CAGR of 27.9% through 2035.

- GPUs maintain the largest market share, dominating AI model training workloads and accounting for nearly 46.5% of AI chip usage.

- The edge AI chip market is forecast to reach $221.5 billion by 2032, driven by growing demand for on-device inference.

- NVIDIA remains the top AI chip vendor, controlling over 90% of the data center GPU segment.

- AI chip integration in smartphones and laptops has surpassed 55% penetration in new devices in 2026.

- China and the U.S. continue to lead in AI chip R&D and adoption, with North America holding over 43% of the market share.

- Inference workloads are growing faster than training workloads, with a CAGR exceeding 38% through 2030.

Recent Developments

- NVIDIA announced its H200 Tensor Core GPU in late 2023, optimized for large language model inference and training.

- AMD released its MI300X AI accelerator, positioning itself as a challenger in the generative AI compute market.

- Google introduced its TPU v5e, designed to support flexible scaling across small and large AI workloads.

- Qualcomm unveiled its AI200 series for edge devices, offering low-power, high-performance inference capabilities.

- OpenAI announced custom AI inference silicon under development, suggesting a trend toward vertically integrated hardware stacks.

- Microsoft is reportedly working on in-house AI chips, reducing dependency on third-party providers for Azure workloads.

- Samsung has started mass production of HBM3E memory chips, essential for supporting high-throughput AI processors.

- Intel launched Gaudi 3, aimed at undercutting NVIDIA’s dominance in training environments.

- TSMC increased AI chip manufacturing capacity by over 25%, responding to explosive demand across global markets.

- Several startups, including Cerebras and Tenstorrent, expanded deployment of wafer-scale and domain-specific architectures.

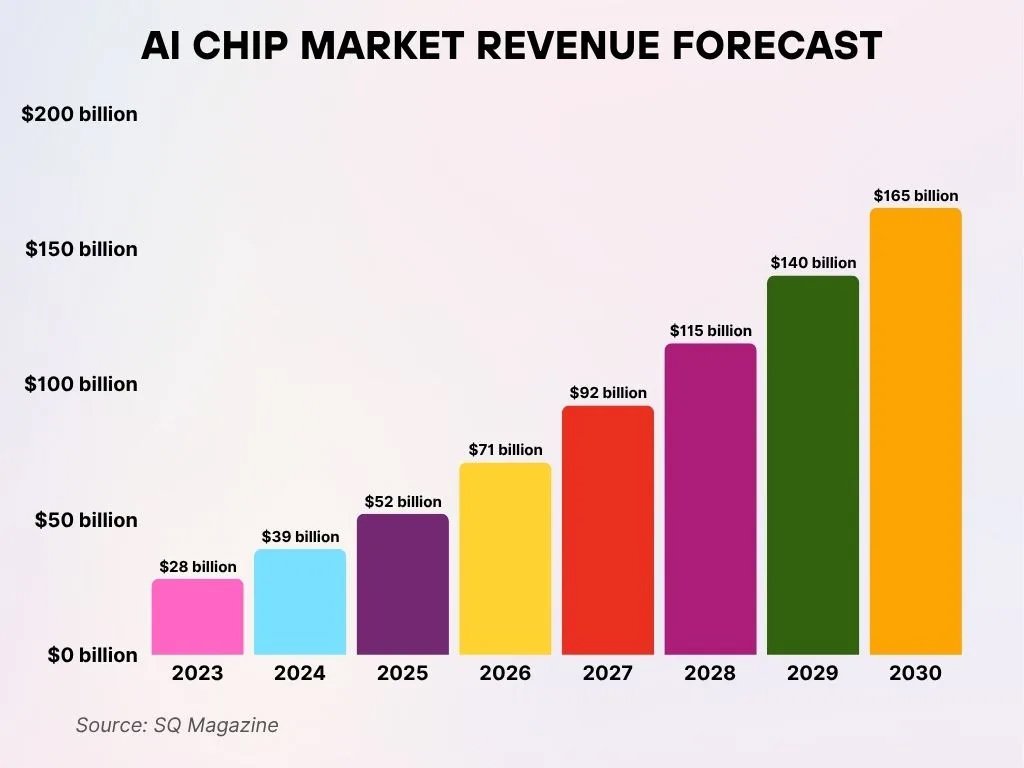

AI Chip Market Revenue Growth Outlook

- The global AI chip market is poised for significant expansion, with total revenue expected to reach $165 billion by 2030, reflecting strong long-term adoption and demand.

- In 2023, the market was valued at $28 billion, underscoring early-stage growth momentum and rising enterprise investment in AI hardware.

- By 2025, market revenue is forecast to nearly double to $52 billion, signaling a rapid acceleration in AI chip deployment across industries.

- The industry is projected to surpass the $100 billion milestone in 2028, with total market revenue climbing to $115 billion.

- Between 2029 and 2030, the AI chip sector is expected to add $25 billion, highlighting sustained investor confidence and expanding use cases.

- Overall, the period from 2023 to 2030 represents an approximate 6x increase in market size, emphasizing AI’s explosive demand, deeper technology integration, and long-term growth potential.
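
The growth multiple and the implied compound annual growth rate (CAGR) follow directly from the 2023 and 2030 endpoints above. The snippet below is a minimal sketch that assumes the standard CAGR formula; the function name `cagr` is illustrative, and the source does not itself state a CAGR for this particular series.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two endpoint values."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints quoted above, in billions of US dollars.
revenue_2023 = 28.0
revenue_2030 = 165.0

multiple = revenue_2030 / revenue_2023
implied_cagr = cagr(revenue_2023, revenue_2030, years=2030 - 2023)

print(f"Growth multiple 2023-2030: {multiple:.1f}x")
print(f"Implied CAGR 2023-2030: {implied_cagr:.1%}")
```

On these endpoints the multiple works out to roughly 5.9x, which is the "approximate 6x" figure, and the implied growth rate is a little under 29% per year.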

Global Market Overview for AI Chip Technology

- The global AI chip market size was valued at $76.8 billion in 2024 and is expected to hit $121.7 billion in 2026.

- North America dominates the market, led by U.S.-based chipmakers and hyperscale cloud providers.

- Asia-Pacific is the fastest-growing region, with China investing heavily in domestic AI chip R&D and production.

- Europe’s AI chip market is driven by automotive and industrial applications, especially in Germany and the Nordics.

- Edge AI is a major growth area, forecast to reach $58.9 billion by 2030, significantly expanding outside the cloud.

- Cloud-based AI chips remain the largest deployment category, especially for training large models.

- The automotive sector is seeing rapid growth in AI chip usage, especially for ADAS and autonomous driving.

- Healthcare AI chip integration is increasing through diagnostic imaging and on-device analysis tools.

- AI chip revenue from consumer electronics (smartphones, laptops) is set to surpass $30 billion by 2027.

- Specialized ASICs and SoCs are gaining popularity for use in AI wearables and IoT devices.

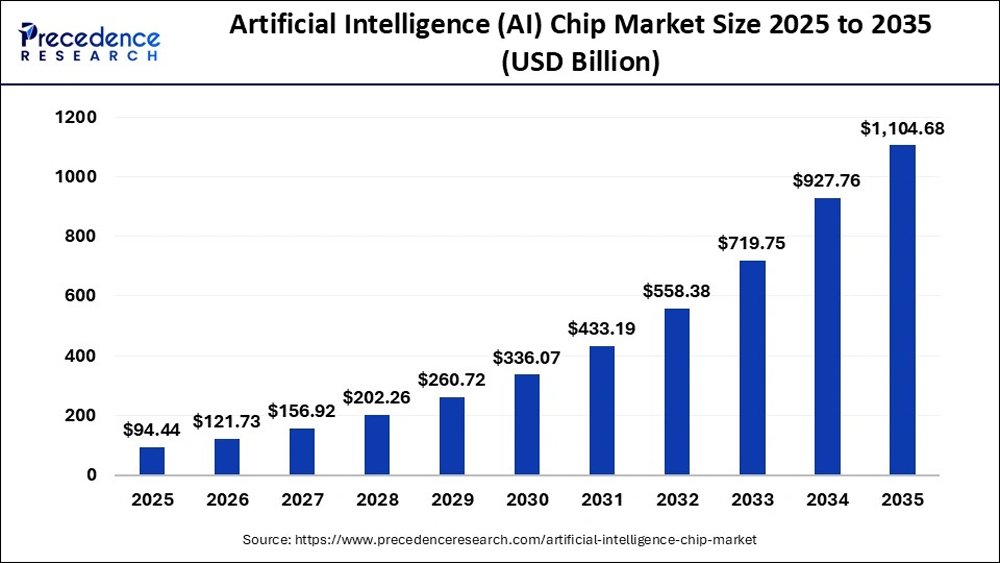

AI Chip Market Size Growth Outlook (2025–2035)

- The global AI chip market is valued at $94.44 billion in 2025, reflecting the early-stage acceleration of AI-driven semiconductor adoption.

- Market size increases to $121.73 billion in 2026, indicating steady year-over-year growth fueled by rising AI workloads.

- In 2027, the AI chip market reaches $156.92 billion, supported by expanding cloud AI and enterprise deployments.

- The market reaches $202.26 billion in 2028, highlighting strong commercial and industrial AI adoption.

- By 2029, AI chip revenues grow to $260.72 billion, driven by data centers, edge AI, and advanced model training needs.

- The industry reaches $336.07 billion in 2030, marking a major scaling phase for AI hardware infrastructure.

- In 2031, the market climbs to $433.19 billion, reflecting the rapid expansion of generative AI and high-performance computing.

- The AI chip market reaches $558.38 billion in 2032, signaling mainstream AI integration across industries.

- Growth continues in 2033, with market value reaching $719.75 billion amid massive investments in AI compute capacity.

- The market approaches the trillion-dollar threshold in 2034, surging to $927.76 billion.

- By 2035, the global AI chip market is projected to reach $1,104.68 billion, underscoring AI chips as a core pillar of the digital economy.
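
The year-by-year figures above can be turned into growth rates to see how steady the projection is. This is a minimal sketch using only the values listed in this section; the variable names are illustrative.

```python
# Market size by year in billions of US dollars, as listed above.
market_size = {
    2025: 94.44,
    2026: 121.73,
    2027: 156.92,
    2028: 202.26,
    2029: 260.72,
    2030: 336.07,
    2031: 433.19,
    2032: 558.38,
    2033: 719.75,
    2034: 927.76,
    2035: 1104.68,
}

years = sorted(market_size)

# Year-over-year growth: this year's value relative to the previous year's.
yoy_growth = {
    year: market_size[year] / market_size[year - 1] - 1
    for year in years[1:]
}

for year, growth in yoy_growth.items():
    print(f"{year}: {growth:.1%}")

# CAGR across the whole span, using only the first and last values.
span = years[-1] - years[0]
cagr = (market_size[years[-1]] / market_size[years[0]]) ** (1 / span) - 1
print(f"CAGR {years[0]}-{years[-1]}: {cagr:.1%}")
```

On these figures the year-over-year rate stays close to 29% through 2034 and is lower, at about 19%, in the final step to 2035. The ten-year CAGR comes out near 27.9%.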

Application Area Statistics for AI Chip Deployments

- Cloud deployments account for the largest share of AI processing workloads globally in 2026.

- Edge AI chip usage is expanding rapidly, with the edge segment projected to exceed $220 billion by the early 2030s.

- Inference functions dominate AI chip deployments, representing more than half of all AI workloads.

- On-device AI capability appears in nearly 55% of new laptops and smartphones released in 2026.

- Industrial automation uses AI chips for predictive maintenance and robotics, growing at over 20% annually.

- Retail and logistics applications report efficiency gains of approximately 15% from AI chip deployments.

- Telecom providers deploy AI chips in network equipment, improving throughput by roughly 30%.

- Installations of AI-powered surveillance systems grew by over 20% year over year.

- Healthcare imaging platforms using AI accelerators reduced processing latency by up to 45%.

- Financial services workloads using AI chips achieved execution speed improvements near 18%.

Role of AI Chip Hardware in Data Centers and Cloud Infrastructure

- AI-driven data center deployments are expanding at an annual rate of approximately 14% through 2030.

- AI workloads account for nearly 25% of total data center compute activity in 2026.

- Hyperscale providers continue double-digit annual increases in AI hardware spending.

- High-bandwidth memory demand linked to AI chips is driving equipment sales growth of about 9%.

- Inference workloads in data centers are expected to surpass training workloads by 2027.

- Multi-chip AI server architectures improve power efficiency by roughly 20%.

- Combined GPU, ASIC, and TPU deployments enhance performance per watt by nearly 35%.

- Hybrid cloud architectures integrating AI chips grow at close to 30% annually.

- AI chip density per server rack has increased by approximately 40% over three years.

- Cloud infrastructure remains the primary environment for large-scale AI model training.

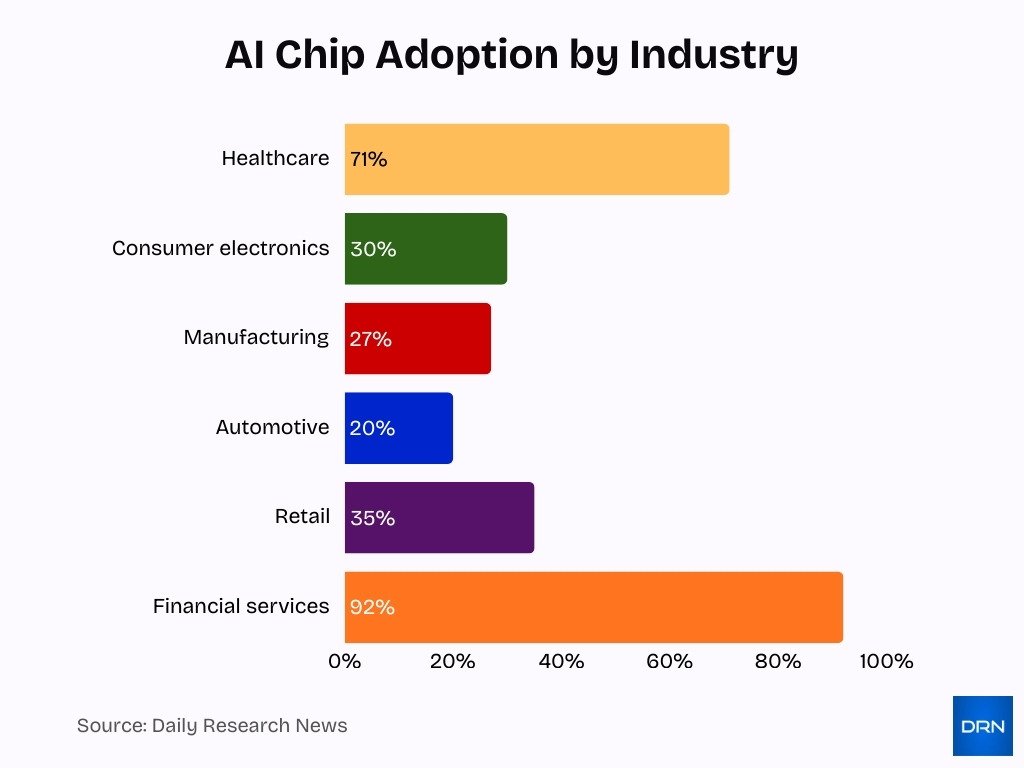

Adoption Trends Across Industries for AI Chip Integration

- Healthcare leads with 71% of organizations using generative AI powered by AI chips for diagnostics and imaging in 2025.

- The automotive AI chipset market reaches $4.2 billion in 2025, driven by ADAS and autonomous vehicles.

- Consumer electronics holds a 30% share of the AI chipset market through smartphones and wearables.

- The market for AI in medical imaging is valued at $14.78 billion in 2025, driven by diagnostics and personalized medicine.

- Manufacturing sees a 27% reduction in unplanned downtime via AI chips in predictive maintenance.

- Automotive AI chipsets are projected to grow at a 20% CAGR, driven by electric and self-driving cars.

- Retail AI recommendation engines boost sales by 35% using AI chips for customer insights.

- China deploys 4.25 million 5G base stations with AI chips for telecom optimization.

- Financial services firms rely on AI chips, with 92% of banks using AI for fraud detection.

Growth of Edge Market and On-Device AI Chip Processing

- The global edge AI hardware market is projected to reach $58.9 billion by 2030.

- Edge AI chip demand in automotive sensors and robotics grows at roughly 30% per year.

- On-device AI chip shipments doubled between 2024 and 2026.

- Edge AI memory components are expected to triple in value over the next decade.

- North America holds about 40% of the edge AI market, with Asia-Pacific growing fastest.

- Edge AI accelerators reduce latency by approximately 50% compared to cloud processing.

- IoT device growth, projected to exceed 20 billion connected units, fuels edge AI adoption.

- Manufacturing productivity linked to edge AI rises at over 20% CAGR.

- Edge AI supports privacy-centric processing by minimizing data transfer.

- Energy-efficient edge chips extend battery life across consumer and industrial devices.

Use of AI Chip Systems in Automotive and Autonomous Vehicles

- Automotive AI chip demand is growing at approximately 30% CAGR through the late 2020s.

- AI chips for advanced driver-assistance systems are expected to be integrated into more than 50 million vehicles by 2026.

- AI chips process multi-sensor data with sub-10-millisecond latency for safety-critical tasks.

- Predictive maintenance powered by AI chips reduces vehicle downtime by roughly 18%.

- Software-defined vehicles reliant on AI chips are expected to reach 15 million units annually by 2028.

- Autonomous freight and logistics pilots increasingly rely on AI inference at the edge.

- AI vision chips improve object detection accuracy by approximately 22%.

- Local AI processing reduces reliance on continuous cloud connectivity.

- AI-enabled sensor fusion enhances high-definition mapping capabilities.

- Automotive OEMs continue to increase in-house AI silicon development.

Healthcare Innovations Using AI Chip Modules

- AI chips in medical imaging reduce diagnostic processing time by up to 40%.

- Hospitals deploying edge AI devices report patient throughput improvements near 15%.

- Genomics platforms using AI accelerators cut analysis time by more than 25%.

- Wearable devices with AI chips enable near real-time biometric monitoring.

- Remote diagnostics powered by AI chips expand access by approximately 20%.

- AI-driven resource optimization improves hospital cost efficiency by about 12%.

- Drug discovery platforms using AI chips accelerate candidate screening by 35%.

- Adoption of telemedicine devices with embedded AI processors grew by nearly 30% year over year.

- Medical AI chip applications are projected to form a multi-billion-dollar market by 2030.

- Security features integrated into AI chips enhance the protection of sensitive health data.

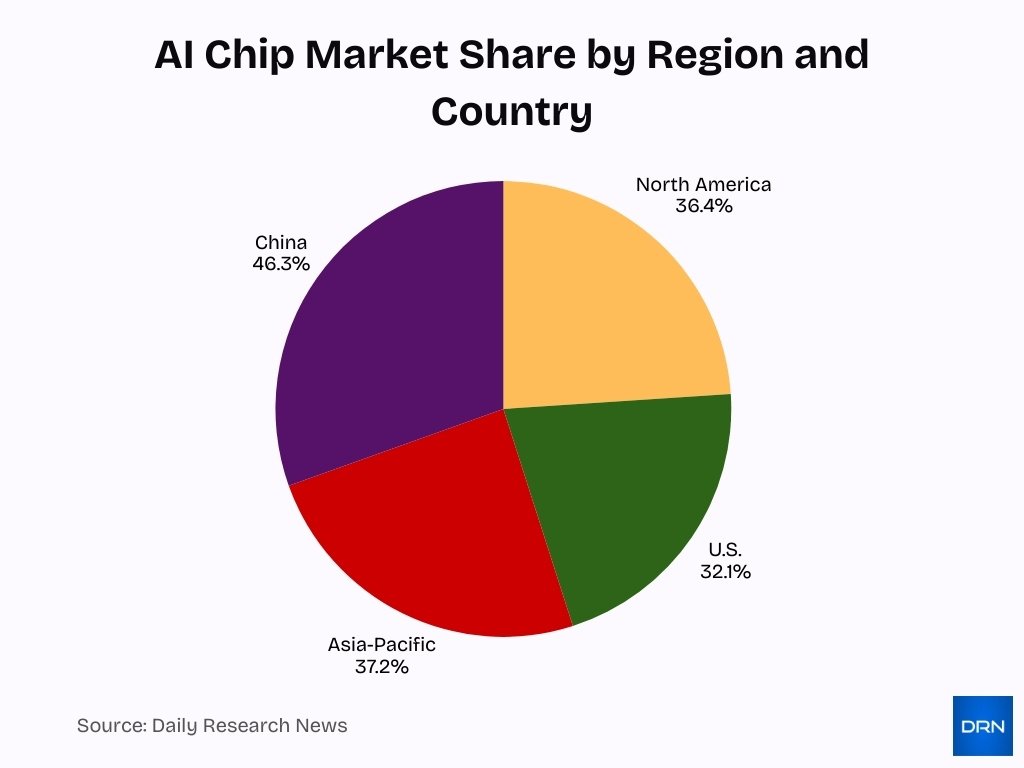

Market Share by Region and Country in the AI Chip Segment

- North America holds 36.4% of the global AI chip market in 2025.

- The U.S. dominates with a 32.1% regional AI chip market share, driven by R&D investments.

- Asia-Pacific commands 37.2% of the AI chip market in 2025.

- China captures 46.3% of Asia-Pacific AI chipset revenue in 2024.

- The North American AI chipset market is valued at $52.15 billion in 2025.

- Europe’s AI chip revenue is projected at $44.63 billion in 2025.

- North America leads with an AI chip market size of $86.33 billion in 2024.

- The Asia-Pacific AI chip market reaches $4.96 billion in 2024.

Integration of AI Chip Components in Consumer Electronics and IoT

- AI chip integration in smartphones and laptops surpassed 55% adoption in 2026.

- IoT devices with on-device intelligence increased shipments by roughly 30% in one year.

- Smart home devices using AI chips reduce energy consumption by approximately 18%.

- Wearables equipped with AI accelerators drive revenue growth near 22% annually.

- AI-powered AR and VR headsets improve interaction latency by about 30%.

- Consumer robots with embedded AI chips increase task accuracy by roughly 25%.

- Connected vehicle infotainment systems rely on AI chips for personalization.

- Smart appliances using AI chips reduce maintenance costs by about 15%.

- Consumer drones improve obstacle avoidance performance by nearly 40%.

- On-device AI processing enhances privacy by limiting cloud data transfers.

Regional Market Breakdown for AI Chip Sales

- North America leads global AI chip sales with over 43% market share.

- The United States dominates regional demand through cloud, defense, and enterprise adoption.

- Asia-Pacific represents the fastest-growing region, driven by China, Japan, and South Korea.

- Europe’s AI chip market benefits from automotive and industrial automation investment.

- China emphasizes domestic AI chip production for inference and edge use cases.

- Japan focuses on AI chips for robotics and advanced manufacturing.

- South Korea leverages AI chips across consumer electronics and memory technologies.

- Emerging regions invest in AI infrastructure for smart cities and connectivity.

- Government incentives strongly influence regional AI chip adoption patterns.

- Regional diversity reflects differences in infrastructure maturity and policy support.

Technology Comparison by AI Chip Type: GPU, TPU, FPGA, ASIC, SoC

- GPUs account for approximately 46.5% of AI chip usage, dominating training workloads.

- TPUs represent about 13% of market share, optimized for matrix-heavy operations.

- ASICs are projected to capture up to 40% share due to efficiency advantages.

- FPGAs contribute flexibility, generating several billion dollars in annual revenue.

- Hybrid CPU-NPU designs grow at over 20% year over year.

- ASICs outperform GPUs in power efficiency for inference tasks.

- SoCs dominate edge and mobile AI deployments due to integration benefits.

- GPUs remain preferred for large-scale training environments.

- Neuromorphic chips remain niche but signal long-term innovation.

- Chip selection depends heavily on workload, latency, and power constraints.

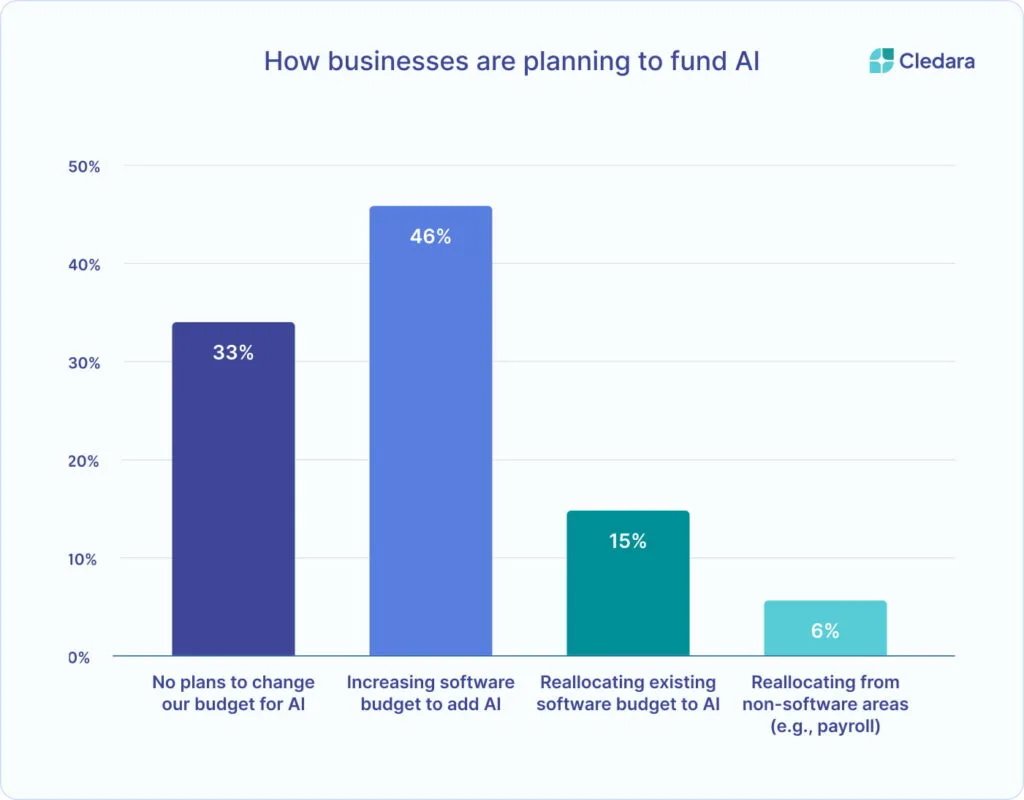

Business Strategies for Financing AI Investments

- 46% of businesses are boosting their software budgets with the explicit goal of adding AI capabilities to their existing technology stack.

- 33% of organizations report no intention to modify their current budgets when it comes to AI-related investments.

- 15% of companies are reallocating their existing software budgets to support AI adoption initiatives.

- 6% of firms plan to redirect funds from non-software areas, such as payroll, to finance AI efforts.

Deployment Statistics for Training vs Inference AI Chip Operations

- Training workloads represented roughly 60% of AI chip usage in 2024.

- Inference workloads grow faster, exceeding 38% CAGR through 2030.

- Data centers prioritize training chips for model development.

- Edge devices focus primarily on inference due to efficiency requirements.

- Training chips require higher-performance, higher-cost architectures.

- Inference-optimized silicon emphasizes power efficiency and scalability.

- Enterprises deploy inference chips for analytics, security, and automation.

- Hybrid environments combine training and inference clusters.

- Next-generation platforms reduce training resource requirements significantly.

- Deployment strategies increasingly balance cost and performance needs.

Workload Distribution Between Cloud and Edge AI Chip Platforms

- Cloud platforms handle the majority of large-scale AI workloads.

- Edge deployments grow rapidly for latency-sensitive applications.

- Cloud data centers account for approximately 64% of AI chip usage.

- Edge platforms grow at CAGRs exceeding 40% for inference tasks.

- Edge AI reduces dependency on constant connectivity.

- Hybrid cloud-edge architectures optimize cost and responsiveness.

- Consumer devices represent a major share of edge AI workloads.

- Cloud environments dominate large-model training and centralized inference.

- Federated learning distributes workloads across the cloud and the edge.

- Balanced architectures improve scalability and resilience.

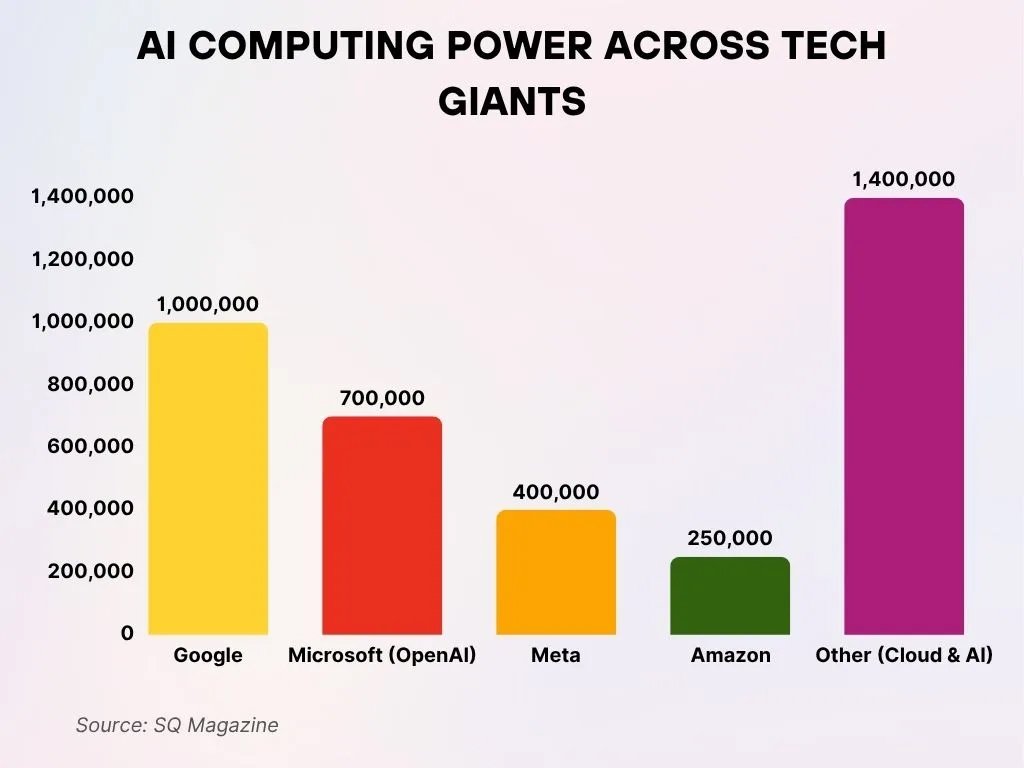

AI Compute Capacity Among Leading Tech Companies

- Google leads among individual companies with a combined total of 1 million H100 equivalents, consisting of 400,000 NVIDIA GPUs and 600,000 TPUs, underscoring its diversified and large-scale AI computing infrastructure.

- Microsoft, including its strategic partnership with OpenAI, commands approximately 700,000 H100 equivalents, all sourced from NVIDIA, reflecting a heavy reliance on high-performance GPU-based AI compute.

- Meta follows with 400,000 NVIDIA H100 equivalents, emphasizing its continued investment in robust and scalable AI infrastructure to support advanced model development.

- Amazon sustains an overall AI computing capacity of 250,000 H100 equivalents, built entirely on NVIDIA GPUs, highlighting its focus on GPU-driven cloud AI workloads.

- The “Other” category, covering multiple cloud providers and independent AI labs, leads collectively with 1.4 million H100 equivalents, demonstrating substantial AI investment levels beyond the largest technology firms.
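
To compare these figures on a common basis, each entry can be expressed as a share of the combined total. This is a minimal sketch that assumes the five categories above do not overlap and that their sum is a reasonable denominator; the source does not state an overall total.

```python
# H100-equivalent compute by company, as listed above.
h100_equivalents = {
    "Google": 1_000_000,
    "Microsoft": 700_000,
    "Meta": 400_000,
    "Amazon": 250_000,
    "Other": 1_400_000,
}

# Assumed denominator: the sum of the listed categories only.
total = sum(h100_equivalents.values())
shares = {name: count / total for name, count in h100_equivalents.items()}

# Print the largest holders first.
for name, share in sorted(shares.items(), key=lambda item: item[1], reverse=True):
    print(f"{name:<10} {share:.1%}")
```

Under that assumption the listed capacity sums to 3.75 million H100 equivalents, with "Other" at a little over a third and Google at a little over a quarter.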

Funding and Investment Trends in AI Chip Startups

- AI chip startups raised $6.2 billion in U.S. venture funding in 2025, marking an 85% year-over-year increase.

- Cerebras Systems secured the largest round, a $1.1 billion Series G for AI chips, in September 2025.

- PsiQuantum raised $1 billion in Series E funding for quantum photonic chipsets in September 2025.

- Groq obtained $750 million in Series E funding before its $20 billion acquisition by NVIDIA.

- Celestial AI attracted a $250 million Series C at a $2.5 billion valuation for optical interconnects.

- Lightmatter closed a $400 million Series D at a $4.4 billion valuation for photonic AI chiplets.

- Edge AI chip startups raised over $5.1 billion in venture capital in H1 2025 amid IoT growth.

- NXP acquired edge AI chip firm Kinara for $307 million in 2025.

- AI chip and hardware startups raised $2.5 billion globally in 2025, up 89% year over year.

- India allocated Rs 2,500 crore (~$300 million) to semiconductor and AI silicon projects in 2025.

Performance and Efficiency Benchmarks for AI Chip Architectures

- Next-generation AI platforms aim to reduce inference costs by up to 10x.

- New architectures reduce the number of GPUs required for training by up to fourfold.

- Custom ASICs deliver 1.5x performance gains over legacy GPUs.

- Advanced memory technologies improve throughput and efficiency.

- GPUs outperform CPUs by more than 20x in neural network tasks.

- ASICs lead in performance per watt for fixed workloads.

- Edge SoCs reduce latency while preserving battery life.

- TPUs maintain high efficiency for large matrix operations.

- FPGAs enable configurable performance trade-offs.

- Benchmarks increasingly reflect real-world AI workloads.

Future Outlook for the Ecosystem Surrounding AI Chip Technology

- The AI chip market is forecast to surge from $31.6 billion today to around $846.8 billion by 2035, growing at a ~34.8% CAGR over the period.

- Overall, AI chip revenues are expected to reach about $315.7 billion by 2030, more than 2.5× the $126 billion recorded in 2023.

- AI chips for data centers and cloud are projected to hit about $453 billion by 2030, expanding at roughly 14% CAGR between 2025 and 2030.

- The edge AI chips market was about $2.47 billion in 2020 and is projected to climb to nearly $9.52 billion by 2027, reflecting rising on-device intelligence demand.

- CPU-based designs still dominate edge AI silicon, accounting for roughly 63–65% of edge AI chip revenue in 2023 due to their versatility in cloud‑native and distributed architectures.

- Leading edge AI chip vendors such as NVIDIA, Qualcomm, Intel, Apple, and MediaTek collectively hold around 55% of the global edge AI chips market, anchoring the broader ecosystem.

- AI chip demand in data centers alone is expected to surpass $400 billion by 2030 as hyperscalers scale AI-first infrastructure and distributed cloud architectures.

- AI chips underpin a projected $1 trillion broader data center silicon opportunity by 2030, spanning GPUs, CPUs, DPUs, memory, and networking optimized for AI workloads.

- Governments and enterprises anticipate that AI will lift productivity: about 64% of companies believe it will do so significantly, reinforcing long-term AI silicon investment.
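
The first forecast in this list gives a starting value, an end value, and a CAGR, but describes the baseline only as "today". The number of years those three figures imply can be recovered by inverting the compound-growth formula. This is a minimal sketch; the function name `years_to_reach` is illustrative, not from the source.

```python
import math


def years_to_reach(start_value: float, end_value: float, annual_rate: float) -> float:
    """Years of compounding at annual_rate needed to grow start_value to end_value."""
    return math.log(end_value / start_value) / math.log(1 + annual_rate)


# Figures quoted above: $31.6 billion to $846.8 billion at a ~34.8% CAGR.
implied_years = years_to_reach(31.6, 846.8, 0.348)
print(f"Implied forecast horizon: {implied_years:.1f} years")
```

The result is about 11 years, which would place the $31.6 billion baseline around 2024 if 2035 is the end year. The source does not state the base year, so this is an inference rather than a reported figure.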

Frequently Asked Questions (FAQs)

The global AI chip market is projected to grow from about $94.44 billion in 2025 to approximately $121.73 billion in 2026.

The AI chip market is expected to grow at a ~27.88% CAGR from 2026 to 2035.

Estimates project the AI chip market to reach around $293 billion by 2030.

The AI inference market is expected to grow from $106.15 billion in 2025 to about $254.98 billion by 2030, at a 19.2% CAGR.
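
The inference figures above can be sanity-checked by compounding the 2025 value forward at the stated rate. This is a minimal sketch assuming simple annual compounding over the five years from 2025 to 2030; the function name `project` is illustrative.

```python
def project(start_value: float, annual_rate: float, years: int) -> float:
    """Value after compounding start_value at annual_rate for the given number of years."""
    return start_value * (1 + annual_rate) ** years


# Figures quoted above, in billions of US dollars.
inference_2025 = 106.15
stated_2030 = 254.98

projected_2030 = project(inference_2025, 0.192, years=2030 - 2025)
gap = projected_2030 - stated_2030

print(f"Projected 2030 value at 19.2% CAGR: ${projected_2030:.2f} billion")
print(f"Difference from stated 2030 value: ${gap:.2f} billion")
```

The compounded value lands within about half a billion dollars of the stated 2030 figure, a gap that is consistent with the CAGR having been rounded to one decimal place.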

Conclusion

The AI chip industry reflects a convergence of rapid technological progress, massive capital investment, and expanding real-world deployment. GPUs continue to anchor large-scale training, while ASICs, TPUs, and SoCs reshape inference and edge computing. Regional competition, startup innovation, and performance breakthroughs underscore the strategic importance of AI silicon.

As workloads distribute across cloud and edge environments, AI chips will remain central to computing innovation and economic growth well into the next decade.